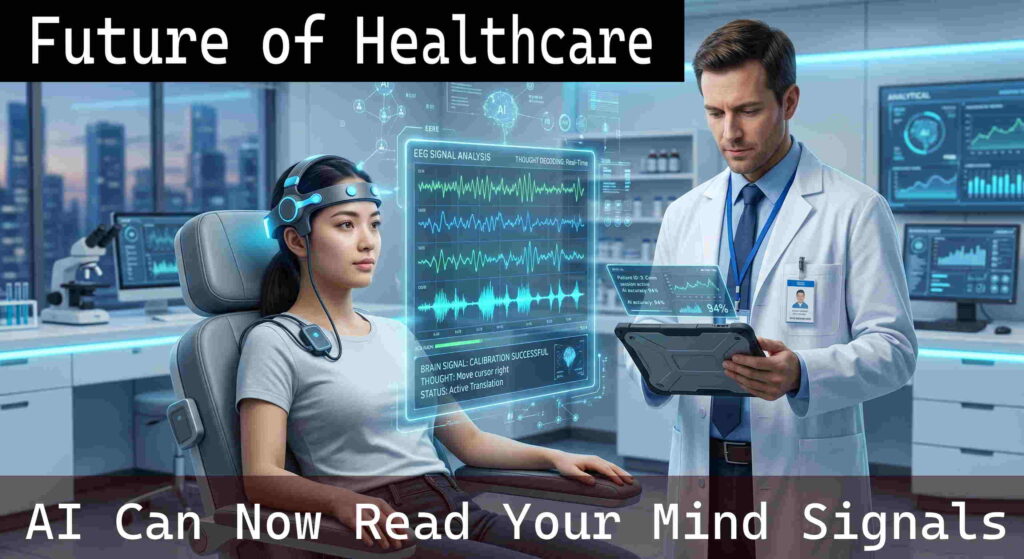

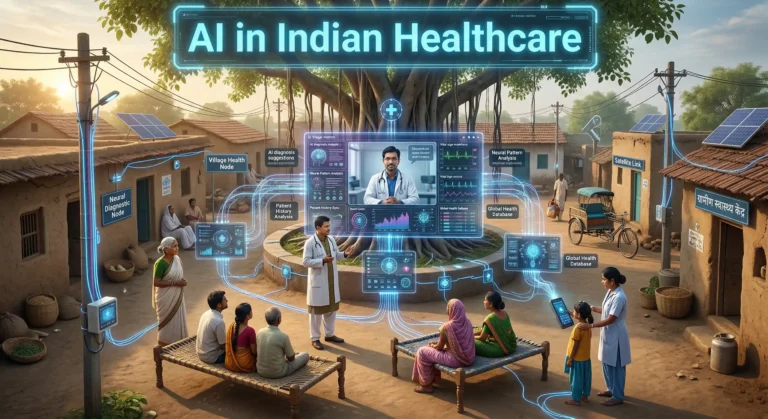

Introduction: The Dawn of Mind-Reading Medicine

Imagine being trapped inside your own body. You are fully awake, your thoughts are crystal clear, and you have so much to say to the people you love. But because of a severe injury or illness, you cannot move your body or utter a single word. For decades, this devastating condition, known as “locked-in syndrome,” was seen as an insurmountable medical wall. Today, that wall is starting to break. Technology has found another path, and it is not physical but digital.

Artificial Intelligence (AI) has reached a stage where it can interpret the electrical signals of the human brain. Acting as a translator, AI converts the brain activity of paralyzed patients, recorded as they try to speak or move, into text on a screen, spoken words from a device, and even movement in robotic limbs.

This is no longer a concept from the movies. It is already happening in research labs and hospitals around the world. By opening a window into the human mind, AI is reshaping healthcare, rehabilitation, and communication. In this guide, we will explore how AI reads brain signals, the technology behind it, the risks involved, and what it means for the future as we move toward 2026 and beyond.

Basic Concepts: How Do Brain Signals Work?

To understand how AI reads the mind, we first need to understand how the brain communicates. The brain contains billions of neurons. Every thought, movement, or emotion happens when these neurons send tiny electrical signals to each other.

Think of your brain as a massive stadium filled with people. When you decide to move your hand, a specific section of the crowd becomes active, and that activity produces electrical signals. Sensors placed on the scalp, or implanted in the brain, can pick up this activity.

However, these signals are not clean. Many brain activities happen at the same time, such as breathing, seeing, and hearing, which makes it difficult to isolate one specific thought. Technologies like EEG (electroencephalography) have long been able to record brain signals, but interpreting them was extremely difficult. This is where AI plays a major role.

Core Explanation: AI as the Ultimate Translator

The real breakthrough lies not just in hardware but in software: Brain-Computer Interfaces (BCIs) combined with deep learning make this possible.

A Brain-Computer Interface acts as a bridge between the brain and a computer: it records brain activity and passes the data to an AI system for analysis. Deep learning, a branch of AI built on multi-layer neural networks, is designed to detect patterns in large, complex data.

When a person thinks about (or tries to say) a word like “Hello,” the system records the resulting brain signals. During training, the AI compares these recordings with labeled examples and, over many repetitions, learns which pattern represents that word.

Once trained, the AI can quickly recognize similar patterns as they occur and convert them into text or speech. This is how a thought becomes a digital output.
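The learn-then-recognize idea can be illustrated with a nearest-centroid classifier, a far simpler technique than the deep learning models real systems use. It averages the training examples for each word into a "template" and labels a new pattern with the closest template. The feature values, words, and function names below are hypothetical, chosen only to show the principle.

```python
import math

def train_templates(examples):
    """Average all training examples of each word into one template pattern.
    `examples` is a list of (feature_vector, word) pairs (hypothetical data)."""
    sums, counts = {}, {}
    for features, word in examples:
        if word not in sums:
            sums[word] = [0.0] * len(features)
            counts[word] = 0
        sums[word] = [s + f for s, f in zip(sums[word], features)]
        counts[word] += 1
    return {w: [s / counts[w] for s in sums[w]] for w in sums}

def decode(features, templates):
    """Return the word whose template is closest (Euclidean distance)."""
    return min(templates, key=lambda w: math.dist(features, templates[w]))

# Invented feature vectors; real recordings are far larger and noisier.
examples = [
    ([0.9, 0.1, 0.2], "hello"),
    ([1.0, 0.2, 0.1], "hello"),
    ([0.1, 0.8, 0.9], "water"),
    ([0.2, 0.9, 0.8], "water"),
]
templates = train_templates(examples)
print(decode([0.85, 0.15, 0.25], templates))  # hello
```

A new pattern never matches a template exactly, so the decoder picks the nearest one; that tolerance to small variations is what lets it "recognize similar patterns".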

How It Works: The Step-by-Step Process

Converting thoughts into actions happens quickly, and a working system repeats the process continuously while it is in use. Here is a simple breakdown:

Step 1: Signal Acquisition (Listening to the Brain)

Sensors such as EEG caps or implanted electrodes measure brain activity, sampling the voltage hundreds or even thousands of times per second.
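The hardware itself cannot be shown in code, but on the software side acquisition typically yields a continuous stream of numbers at a fixed sampling rate, which is then cut into short windows for analysis. This minimal sketch assumes a 250 Hz sampling rate and 1-second windows; both values and the function name are illustrative.

```python
SAMPLE_RATE_HZ = 250   # assumed sampling rate, typical of EEG equipment
WINDOW_SECONDS = 1.0   # assumed analysis window length

def split_into_windows(samples, sample_rate=SAMPLE_RATE_HZ, window_s=WINDOW_SECONDS):
    """Cut a continuous stream of voltage readings into non-overlapping windows.
    Leftover samples that do not fill a whole window are dropped."""
    size = int(sample_rate * window_s)
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, size)]

stream = [0.0] * 1000  # 4 seconds of placeholder readings at 250 Hz
windows = split_into_windows(stream)
print(len(windows), len(windows[0]))  # 4 250
```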

Step 2: Signal Pre-Processing (Cleaning the Noise)

Artifacts, meaning unwanted signals caused by blinking, muscle movement, or electrical interference, are removed to leave cleaner data.
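Real pipelines usually clean signals with band-pass filters or more advanced methods such as independent component analysis. A cruder but easy-to-follow alternative, sketched below, centers each window on zero and discards any window whose peak-to-peak swing is too large, since eye blinks produce big voltage deflections. The 100 µV threshold and the function names are assumptions for illustration.

```python
from statistics import mean

BLINK_THRESHOLD_UV = 100.0  # assumed threshold in microvolts; device-dependent

def remove_offset(window):
    """Subtract the window's average so the signal is centered on zero."""
    m = mean(window)
    return [x - m for x in window]

def is_artifact(window, threshold=BLINK_THRESHOLD_UV):
    """Flag windows whose peak-to-peak swing is too large for ordinary EEG."""
    return max(window) - min(window) > threshold

def clean(windows):
    """Center every window, then drop those that look like blink artifacts."""
    centered = [remove_offset(w) for w in windows]
    return [w for w in centered if not is_artifact(w)]

normal = [10.0, 12.0, 8.0, 11.0]
blink = [10.0, 150.0, 140.0, 9.0]
print(len(clean([normal, blink])))  # 1
```

Rejecting whole windows throws data away; filtering approaches try to subtract the artifact while keeping the underlying brain signal.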

Step 3: Feature Extraction (Finding the Clues)

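The system extracts key characteristics (features) from the cleaned signals, such as how much activity falls in particular frequency bands and how strong it is. Deep learning models can also learn useful features directly from the data.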
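A common hand-crafted feature is band power: how much of a window's energy falls within a frequency range. The sketch below computes it with a naive discrete Fourier transform (real systems use an FFT library). Using the alpha (8 to 12 Hz) and beta (13 to 30 Hz) bands as the feature set is an illustrative assumption, as are the function names.

```python
import math

def band_power(window, sample_rate, low_hz, high_hz):
    """Sum the power of DFT bins whose frequency lies in [low_hz, high_hz].
    Naive O(n^2) DFT: fine for a sketch, too slow for production."""
    n = len(window)
    power = 0.0
    for k in range(1, n // 2 + 1):
        freq = k * sample_rate / n
        if low_hz <= freq <= high_hz:
            re = sum(x * math.cos(2 * math.pi * k * t / n) for t, x in enumerate(window))
            im = -sum(x * math.sin(2 * math.pi * k * t / n) for t, x in enumerate(window))
            power += (re * re + im * im) / n
    return power

def extract_features(window, sample_rate=250):
    """Return [alpha power, beta power] for one window (assumed feature set)."""
    return [band_power(window, sample_rate, 8, 12),
            band_power(window, sample_rate, 13, 30)]

# One second of a pure 10 Hz sine wave: its power should land in the alpha band.
fs = 250
sine = [math.sin(2 * math.pi * 10 * t / fs) for t in range(fs)]
alpha, beta = extract_features(sine, fs)
print(alpha > beta)  # True
```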

Step 4: Classification (Decoding the Thought)

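A trained model (a classifier) predicts what the signal means, for example which word, letter, or movement the person intended, based on the patterns it learned during training.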

Step 5: Output and Action (Making It Real)

The decoded signal is converted into an action, like typing text or moving a robotic arm.
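Acting on a wrong prediction can be worse than not acting at all, so one reasonable design is to turn a decoded label into an action only when the decoder is confident enough. The label-to-action mapping, the 0.8 threshold, and the function name below are hypothetical; a real threshold would need tuning for each user and device.

```python
# Hypothetical mapping from decoded labels to device actions.
ACTIONS = {
    "left": "move cursor left",
    "right": "move cursor right",
    "select": "click",
}
MIN_CONFIDENCE = 0.8  # assumed threshold

def to_action(label, confidence):
    """Return the action for a decoded label, or None if the decoder is unsure
    or the label is unknown (doing nothing is safer than acting on a guess)."""
    if confidence < MIN_CONFIDENCE or label not in ACTIONS:
        return None
    return ACTIONS[label]

print(to_action("left", 0.93))    # move cursor left
print(to_action("select", 0.55))  # None
```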

Types / Components of Brain-Computer Interfaces

BCIs are classified into three main types based on how they connect to the brain.

1. Non-Invasive Systems

These use external devices such as EEG caps. They require no surgery and are safe, but the skull and scalp weaken and blur the signals before they reach the sensors.

2. Semi-Invasive Systems (ECoG)

Electrodes are placed on the surface of the brain, beneath the skull, without penetrating brain tissue. This still requires surgery, but signal quality is better than with EEG.

3. Invasive Systems (Intracortical)

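Electrodes are implanted inside the brain tissue, where they can record the activity of individual neurons or small groups of neurons. This gives the most detailed data but carries the greatest surgical risk.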
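The difference between "weaker" and "more accurate" signals is usually described with the signal-to-noise ratio (SNR), a standard measure of how strongly the activity of interest stands out from background noise, often expressed in decibels:

```latex
\mathrm{SNR}_{\mathrm{dB}} = 10 \log_{10}\!\left(\frac{P_{\mathrm{signal}}}{P_{\mathrm{noise}}}\right)
```

Here $P_{\mathrm{signal}}$ and $P_{\mathrm{noise}}$ are the average powers of the signal and the noise. Equal powers give 0 dB, and every tenfold increase in the ratio adds 10 dB. Because the skull and scalp attenuate the signal before it reaches EEG electrodes, $P_{\mathrm{signal}}$ shrinks relative to the noise, which is why sensors placed closer to the neurons tend to achieve a higher SNR.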

Features and Benefits of AI Mind-Reading in Healthcare

- Restoring Communication: Patients who cannot speak can communicate again through text or synthesized speech decoded from their brain activity.

- Regaining Physical Mobility: Brain signals can control robotic limbs or exoskeletons.

- Faster Recovery: AI may support rehabilitation by monitoring brain activity during therapy and tracking progress over time.

- Brain Health Monitoring: AI may help detect brain activity patterns linked to seizures, stress, or depression.

Real-world Use Cases: Changing Lives Today

The “Digital Avatar” for Stroke Victims

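In research studies, patients who lost the ability to speak after a stroke have communicated through AI systems that turn their brain signals into text and a synthesized voice, sometimes delivered through an animated on-screen avatar.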

Neuralink and Computer Control

In Neuralink's clinical trial, participants with paralysis have controlled a computer cursor and played games using only their brain activity.

Mind-Controlled Prosthetics in Amputees

In research settings, AI decoders help amputees control artificial limbs more naturally through neural signals, although most of this work is still experimental.

Comparison Table: Invasive vs. Non-Invasive BCI Systems

Here is a comparison between the two ends of the spectrum, non-invasive and invasive BCIs:

| Feature | Non-Invasive (EEG Caps) | Invasive (Brain Implants) |
|---|---|---|
| Surgery Required | No | Yes |
| Signal Quality | Low | Very High |
| Speed | Moderate | Fast |
| Risk | Minimal | High |
| Use Case | Basic tasks | Advanced control |

Security, Risks, and Ethical Challenges

1. Neuro-Privacy

Brain data is extremely personal. There are concerns that companies could collect, share, or sell it without users fully understanding how it will be used.

2. Brain Hacking

Devices that connect wirelessly or to the internet could be targeted by attackers seeking to steal data or tamper with commands.

3. Physical Risks

Implants carry surgical risks, including infection, bleeding, and scar tissue forming around the electrodes over time.

4. Accessibility Gap

This technology is expensive and may not be available to everyone.

Best Practices: Regulating the Future of Mind Tech

Strong rules are needed to protect users.

Encryption, local processing, and strict laws can help protect brain data.
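One way to combine local processing with protection against tampering is to keep raw brain data on the device and send out only the decoded result, tagged with an HMAC so the receiver can detect any modification. This is a sketch under stated assumptions: the key and function names are hypothetical, and an HMAC provides integrity, not secrecy, so real encryption (for example TLS) would still be needed on top.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; a real device would provision this securely,
# never hard-code it.
DEVICE_KEY = b"example-key-do-not-use"

def package_result(label, confidence, key=DEVICE_KEY):
    """Package only the decoded result (no raw brain data) with an HMAC tag."""
    body = json.dumps({"label": label, "confidence": confidence}, sort_keys=True)
    tag = hmac.new(key, body.encode(), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}

def verify(message, key=DEVICE_KEY):
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, message["body"].encode(), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["tag"])

msg = package_result("select", 0.91)
print(verify(msg))  # True
msg["body"] = msg["body"].replace("select", "delete")  # simulated tampering
print(verify(msg))  # False
```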

Systems should also include emergency controls, so users or caregivers can pause or shut down a device immediately.
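An emergency control can be modeled as a stop flag that overrides every decoded command until someone deliberately resumes the device. The class and method names below are hypothetical; the point is only that the stop check runs before any command is executed.

```python
class SafeController:
    """Wraps decoded commands with an emergency stop that always takes priority.
    Illustrative sketch; names are hypothetical."""

    def __init__(self):
        self.stopped = False

    def emergency_stop(self):
        # Could be triggered, for example, by a physical button or a caregiver.
        self.stopped = True

    def resume(self):
        self.stopped = False

    def execute(self, command):
        if self.stopped:
            return "ignored: emergency stop active"
        return f"executing: {command}"

arm = SafeController()
print(arm.execute("open hand"))   # executing: open hand
arm.emergency_stop()
print(arm.execute("close hand"))  # ignored: emergency stop active
```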

Advanced Concepts: The Closed-Loop System

Future systems will not only read signals but also send feedback to the brain, for example by electrically stimulating the areas that process touch.

This could allow patients to feel sensations through their robotic limbs.
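A closed loop has two directions: decoded intentions drive the robotic limb, and sensor readings from the limb are turned into stimulation sent back to the brain. The sketch below shows one cycle with a simple linear mapping clamped to a safety limit. All limits, units, and names are hypothetical; real stimulation parameters are set by clinicians and vary by device and patient.

```python
MAX_PRESSURE_N = 10.0  # assumed fingertip sensor range, newtons
MAX_STIM_UA = 60.0     # assumed safety ceiling on stimulation current, microamps

def pressure_to_stimulation(pressure_n):
    """Scale a pressure reading linearly to a stimulation amplitude,
    clamped so it never falls below zero or exceeds the safety ceiling."""
    fraction = min(max(pressure_n / MAX_PRESSURE_N, 0.0), 1.0)
    return fraction * MAX_STIM_UA

def control_step(decoded_grip, sensor_pressure):
    """One loop cycle: brain -> robotic hand, then hand sensor -> brain."""
    command = f"grip {decoded_grip}"                     # decoded intention drives the hand
    feedback = pressure_to_stimulation(sensor_pressure)  # touch sent back as stimulation
    return command, feedback

print(control_step("gentle", 2.5))  # ('grip gentle', 15.0)
print(control_step("firm", 25.0))   # ('grip firm', 60.0)
```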

Future Trends: What to Expect in 2026 and Beyond

Non-invasive consumer devices are likely to become more common.

Healthcare providers will increasingly use AI to speed up recovery and personalize therapy.

Robotic assistance is expected to make implant surgeries safer and more precise.

Conclusion: A New Era of Human Potential

AI reading brain signals is one of the biggest advancements in healthcare.

It is helping people regain communication and movement.

At the same time, patient privacy and ethical safeguards must keep pace with the technology.

The future of healthcare is evolving rapidly, and it is becoming more connected to the human mind than ever before.