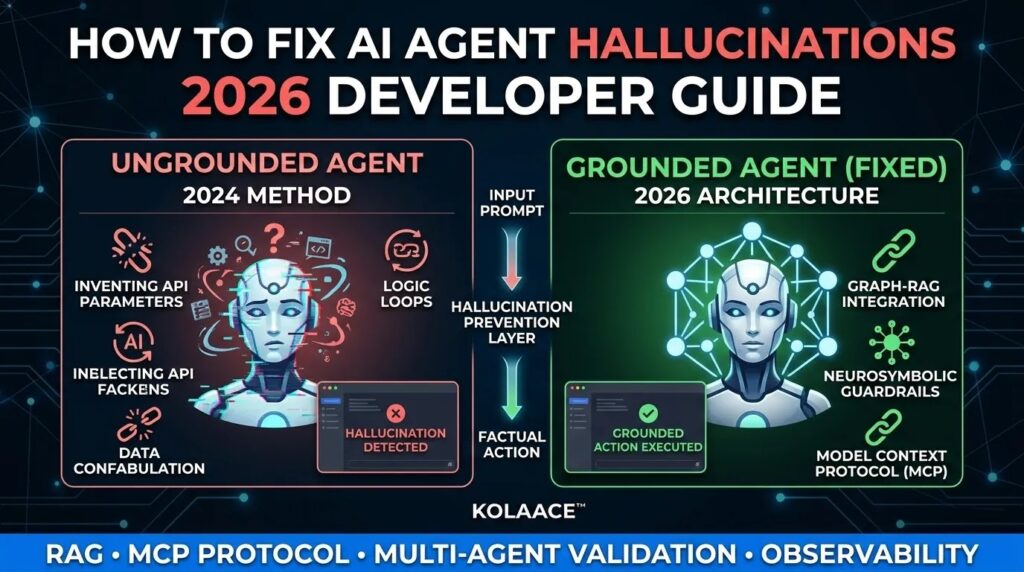

In 2026, the definition of an “AI Hallucination” has evolved. It is no longer just a chatbot claiming that “George Washington invented the Internet.” In the world of autonomous agents, a hallucination is also a logic failure: an agent incorrectly assuming it has permission to delete a database, or misinterpreting a tool’s API parameters.

As we scale KOLAACE™ into a high-traffic authority, understanding the “Nervous System” of AI agents is critical. If your custom agents are “hallucinating” actions, the problem is not an overly creative model; it is a grounding deficit. This guide explores the four advanced techniques used in 2026 to push agents toward 99.9% factual accuracy.

The Cost of Hallucination in 2026

Hallucinations in 2026 aren’t just annoying; they are expensive. A single ungrounded agentic loop can exhaust thousands of tokens in seconds or execute unauthorized financial transactions.

| Error Type | The “Hallucination” | The 2026 Solution |
|---|---|---|
| Parameter Drift | Agent invents non-existent API arguments. | Semantic Tool Selection |
| Logic Loops | Agent repeats failed steps indefinitely. | Reflection & Traceability |
| Data Confabulation | Agent fills missing DB data with “guesses.” | Graph-RAG Integration |

Market Growth: AI Accuracy Demand

As autonomous systems move into regulated sectors (Finance, Healthcare, Legal), the market for “Hallucination Detection” tools is exploding.

*Chart: Enterprise Spending on AI Guardrails (2024-2026). Projected investment in AI safety, grounding, and observability software.*

4 Advanced Techniques to Stop Hallucinations

1. Graph-RAG (Retrieval-Augmented Generation)

Traditional RAG relies on “Vector Search,” which often retrieves text chunks that are relevant but factually incomplete. Graph-RAG maps relationships between entities (e.g., Customer -> Order -> Shipping Status). By using a Knowledge Graph, the agent doesn’t “guess” the relationship; it follows an explicit edge stored in the graph, and a missing edge is reported as missing rather than filled in.
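The edge-following idea can be sketched in a few lines of Python. This is a minimal illustration, not a production Graph-RAG stack: the in-memory `GRAPH` dictionary, the entity IDs, the relation names, and the helpers `follow` and `grounded_context` are all hypothetical stand-ins for a real graph database and retrieval layer.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical toy knowledge graph: (source entity, relation) -> target entity.
GRAPH: Dict[Tuple[str, str], str] = {
    ("customer:42", "placed"): "order:1001",
    ("order:1001", "has_shipping_status"): "shipped",
}


def follow(start: str, relations: List[str]) -> Optional[str]:
    """Walk the graph along the given relations; return None if any edge is missing."""
    node = start
    for relation in relations:
        next_node = GRAPH.get((node, relation))
        if next_node is None:
            return None  # no stored edge, so refuse to guess
        node = next_node
    return node


def grounded_context(customer_id: str) -> str:
    """Build the text handed to the LLM from stored edges only."""
    status = follow(customer_id, ["placed", "has_shipping_status"])
    if status is None:
        return f"No shipping status is recorded for {customer_id}."
    return f"Shipping status for {customer_id}: {status}."
```

The key design choice is that a missing edge produces an explicit "not recorded" statement in the context, so the model has nothing to confabulate around.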

2. Neurosymbolic Guardrails

This is the “Logic Police” for your agent. Instead of trusting the LLM to use a tool correctly, you wrap the tool in a Symbolic Layer: deterministic rules, written in ordinary code, that validate every call before it executes.

- Example: If an agent tries to call `book_hotel(price=0)`, the guardrail rejects the call before it ever reaches the API, forcing the LLM to re-evaluate its logic.
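A minimal sketch of such a wrapper follows, assuming a single rule (the price must be a positive number). The `book_hotel` body is a placeholder for the real booking API, and `GuardrailViolation`, `guarded_book_hotel`, and `call_tool_with_feedback` are hypothetical names introduced for illustration.

```python
class GuardrailViolation(Exception):
    """Raised when a tool call breaks a symbolic rule."""


def book_hotel(price: float) -> str:
    # Placeholder for the real booking API (hypothetical).
    return f"booked at {price:.2f}"


def guarded_book_hotel(price) -> str:
    # Symbolic rules are plain code, evaluated before the API is touched.
    if isinstance(price, bool) or not isinstance(price, (int, float)):
        raise GuardrailViolation("price must be a number")
    if price <= 0:
        raise GuardrailViolation("price must be greater than 0")
    return book_hotel(price)


def call_tool_with_feedback(price) -> dict:
    """Return a structured result that the agent loop can feed back to the LLM."""
    try:
        return {"ok": True, "result": guarded_book_hotel(price)}
    except GuardrailViolation as err:
        return {"ok": False, "error": str(err)}
```

Returning the violation as data, rather than crashing the loop, is what lets the agent see why the call was refused and try again with corrected arguments.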

3. The Model Context Protocol (MCP)

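Announced by Anthropic in late 2024 and adopted across the industry through 2025, **MCP** is now the 2026 standard. It provides a universal “nervous system” for agents: an open protocol through which servers expose tools and data to an agent in a uniform, explicitly described way. Because every tool is declared up front, the host application can connect only the servers and tools relevant to the current sub-task. That scoping reduces “Context Hallucination” and “Choice Overload.”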
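The scoping idea can be illustrated without any MCP library. The sketch below is plain Python and does not use the MCP SDK or wire protocol; the tool names, the sub-task tags, and the helpers `tools_for` and `is_call_allowed` are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical tool catalogue: tool name -> sub-tasks it is relevant to.
TOOL_CATALOGUE: Dict[str, Set[str]] = {
    "search_flights": {"travel"},
    "book_hotel": {"travel"},
    "issue_refund": {"billing"},
    "delete_database": {"admin"},
}


def tools_for(subtask: str) -> List[str]:
    """Return only the tools tagged for this sub-task, in a stable order."""
    return sorted(name for name, tags in TOOL_CATALOGUE.items() if subtask in tags)


def is_call_allowed(subtask: str, tool_name: str) -> bool:
    """Reject calls to tools that were never exposed for this sub-task."""
    return tool_name in tools_for(subtask)
```

An agent working on a travel sub-task is shown two tools instead of four, and a call to `delete_database` is refused because that tool was never in scope.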

4. Multi-Agent Cross-Validation

Never trust a single agent for high-stakes work. In 2026, we use a “Judge-Agent” architecture.

- Agent A: Performs the task.

- Agent B (The Verifier): Checks the output against the source documentation. If Agent B finds a discrepancy, the process is rolled back.
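The worker/verifier split above can be sketched as follows. This is a simplified illustration under stated assumptions: both agents are plain Python callables rather than LLM calls, claims and source documentation are modeled as key/value dictionaries, and `verify` and `run_with_judge` are hypothetical names.

```python
from typing import Callable, Dict, List


def verify(claims: Dict[str, str], source: Dict[str, str]) -> List[str]:
    """Agent B: list every claim that disagrees with, or is absent from, the source."""
    return [key for key, value in claims.items() if source.get(key) != value]


def run_with_judge(
    worker: Callable[[], Dict[str, str]],
    source: Dict[str, str],
    state: Dict[str, str],
) -> dict:
    """Apply Agent A's output to state, then roll back if Agent B objects."""
    snapshot = dict(state)  # shallow copy kept for rollback
    claims = worker()       # Agent A performs the task
    state.update(claims)
    discrepancies = verify(claims, source)
    if discrepancies:
        # Restore the state exactly as it was before Agent A ran.
        state.clear()
        state.update(snapshot)
        return {"committed": False, "discrepancies": discrepancies}
    return {"committed": True, "discrepancies": []}
```

In a real deployment the verifier would typically be a separate model or prompt with access to the source documents, but the control flow (snapshot, act, check, roll back) stays the same.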

Top Hallucination Detection Tools of 2026

- Galileo Luna-2: Real-time protection with sub-200ms latency for content blocking.

- Maxim AI: Best for end-to-end lifecycle simulation before production.

- DeepEval (Open Source): An “AI Pytest” framework with over 30 built-in metrics for groundedness.

— KOLAACE™ Engineering

Final Verdict: Accuracy is the New Speed

Fixing hallucination errors is the difference between an AI toy and an enterprise-grade AI tool. By implementing Graph-RAG and MCP, you ensure your custom agents are grounded in reality, ready for the high-traffic demands of the 2026 web.