Many AI agent projects work perfectly during demos and fail the moment real users interact with them. A support agent invents refund policies. An automation bot triggers the wrong workflow. A research assistant cites data that does not exist. These are not harmless chatbot mistakes. In production systems, hallucination errors create operational risk, customer distrust, and expensive debugging sessions.

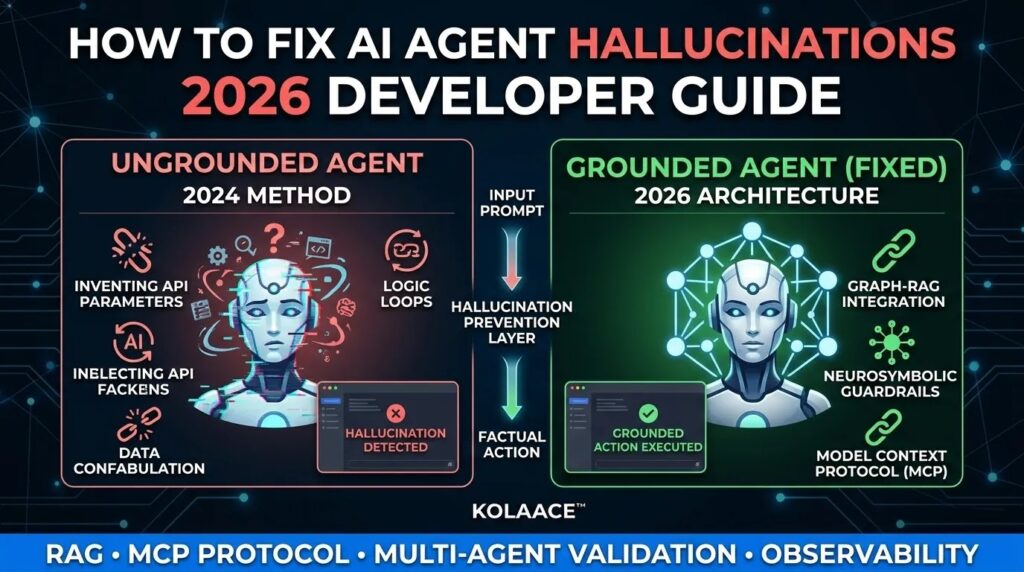

In 2026, developers building custom AI agents are expected to treat hallucination prevention as part of core infrastructure design. Prompt engineering alone is no longer enough. Reliable agents require validation layers, controlled memory, grounded retrieval, and clear execution boundaries.

Testing multiple autonomous workflows across customer support, eCommerce automation, and SaaS dashboards makes one pattern obvious: most hallucinations happen because the system gives the model too much freedom without verification.

This guide explains practical ways to reduce hallucination errors in custom AI agents using production-focused techniques that developers can implement today.

What Are Hallucination Errors in AI Agents

Hallucination errors occur when an AI agent generates outputs that sound valid but are incorrect, fabricated, or unsafe. In conversational systems this may appear as a fake answer. In autonomous agents, the consequences are far more serious because the system can take actions automatically.

Production logs and testing environments show that hallucinations usually fall into four categories:

- Fabricated information: The model invents facts, IDs, prices, or references.

- Invalid tool execution: The agent calls tools with incorrect parameters or unsupported actions.

- Reasoning drift: The agent moves away from the original task and starts unrelated operations.

- False confidence: The system presents uncertain outputs as verified facts.

These failures become dangerous in systems connected to databases, payment tools, admin dashboards, or customer records.

Why Hallucination Errors Become Worse in Autonomous Agents

Traditional chatbots only generate text. Modern AI agents perform actions. They search databases, execute workflows, send emails, update records, and interact with external APIs. This changes the risk level completely.

In real deployment scenarios, one incorrect output can trigger a chain of automated failures.

| Failure Scenario | What the Agent Does | Business Impact |
|---|---|---|
| Incorrect API Call | Submits invalid parameters to backend systems | Failed transactions or corrupted records |
| Invented Customer Data | Creates fake order or account details | Loss of trust and support escalations |
| Looping Behavior | Repeats the same reasoning cycle endlessly | High infrastructure cost and system slowdown |
| Wrong Workflow Decision | Executes unintended automation steps | Operational and financial damage |

Small businesses are especially vulnerable because they often connect AI agents directly to customer operations without extensive testing infrastructure.

Common Causes of AI Agent Hallucinations

Poor Data Grounding

If the model does not have access to verified information, it fills gaps with probability-based guesses. This is one of the biggest causes of fabricated outputs.

Overloaded Context Windows

Giving the agent excessive instructions, documents, or conversation history often reduces accuracy instead of improving it. Important signals get diluted.

Weak Tool Definitions

Ambiguous API documentation and poorly structured tool schemas confuse the agent during execution.
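
To make "weak tool definitions" concrete, here is a minimal sketch of a tightly specified tool. It is written as a JSON-Schema-style Python dict of the kind many function-calling APIs accept. The tool name `issue_refund`, its fields, and the `ORD-` ID pattern are hypothetical examples and do not follow any specific vendor's format.

```python
# Hypothetical tool definition in JSON Schema style.
# Every field has an explicit type and description. Free-form strings are
# constrained with enums or patterns wherever possible.
ISSUE_REFUND_TOOL = {
    "name": "issue_refund",
    "description": (
        "Refund an existing order. Only call this after the order has been "
        "looked up and confirmed to exist. Never invent order IDs."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "order_id": {
                "type": "string",
                "pattern": "^ORD-[0-9]{6}$",  # hypothetical ID format
                "description": "Order ID returned by the order lookup tool.",
            },
            "amount_cents": {
                "type": "integer",
                "minimum": 1,
                "description": "Refund amount in cents; must not exceed the order total.",
            },
            "reason": {
                "type": "string",
                "enum": ["damaged", "not_received", "wrong_item", "other"],
            },
        },
        "required": ["order_id", "amount_cents", "reason"],
        "additionalProperties": False,  # reject any field the model makes up
    },
}
```

A tool whose only parameter is a free-form `data` string leaves the model to guess the format. This version tells the model exactly which values are legal, and the validation layer can reuse the same schema to reject bad calls.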

Missing Validation Layers

Many developers pass model outputs straight to execution without checking whether they are logically or technically valid.

High Creativity Settings

High temperature settings increase sampling randomness. That may help brainstorming tools, but it makes operational agents unstable.

Step-by-Step Framework to Fix Hallucination Errors

Step 1: Ground the Agent with Reliable Data Sources

Do not expect the model to remember business logic or factual information accurately. Connect the agent to structured sources such as:

- SQL databases

- Vector databases

- Knowledge bases

- Internal documentation systems

- Verified APIs

In testing environments, retrieval-based architectures reduce hallucinations significantly because the model references actual data before generating responses.
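
The following is a minimal sketch of retrieval-grounded prompting. It makes two simplifying assumptions: a small in-memory dict stands in for the knowledge base, and a crude keyword-overlap score stands in for vector search. `POLICY_DOCS`, `retrieve`, and `build_grounded_prompt` are hypothetical names. The key design choice is that the function returns `None` when nothing relevant is retrieved. The agent can then refuse or escalate instead of letting the model guess.

```python
import re
from typing import List, Optional, Tuple

# Hypothetical knowledge base. In production this would be a SQL table,
# a vector store, or an internal documentation API.
POLICY_DOCS = {
    "refund-policy": "Refunds are available within 30 days of delivery for unused items.",
    "shipping-policy": "Standard shipping takes 3 to 5 business days within the country.",
    "warranty-policy": "Electronics carry a 12 month limited warranty against defects.",
}

STOPWORDS = {"a", "an", "and", "are", "do", "for", "have", "how", "i", "in",
             "is", "my", "of", "the", "to", "what", "within"}


def tokenize(text: str) -> set:
    """Lowercase word tokens minus stopwords (a crude stand-in for embeddings)."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, min_overlap: int = 2, top_k: int = 2) -> List[Tuple[str, str]]:
    """Return up to top_k (doc_id, text) pairs sharing at least min_overlap terms with the question."""
    q_terms = tokenize(question)
    scored = []
    for doc_id, text in POLICY_DOCS.items():
        overlap = len(q_terms & tokenize(text))
        if overlap >= min_overlap:
            scored.append((overlap, doc_id, text))
    scored.sort(reverse=True)
    return [(doc_id, text) for _, doc_id, text in scored[:top_k]]


def build_grounded_prompt(question: str) -> Optional[str]:
    """Build a prompt restricted to retrieved sources, or return None when nothing relevant exists."""
    docs = retrieve(question)
    if not docs:
        return None  # caller answers "I don't know" or escalates instead of letting the model guess
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in docs)
    return (
        "Answer using ONLY the sources below and cite the source ID. "
        "If the sources do not contain the answer, say you do not know.\n\n"
        f"SOURCES:\n{sources}\n\nQUESTION: {question}"
    )
```

For example, `build_grounded_prompt("How many days do I have to request refunds?")` returns a prompt containing only the `[refund-policy]` source. A question about something the knowledge base does not cover returns `None`.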

Step 2: Validate Every Tool Call Before Execution

Never allow raw model outputs to execute automatically.

Create a validation layer that checks:

- Required parameters

- Allowed actions

- Data types

- Permission levels

- Rate limits

For example, if an agent attempts to refund an order that does not exist, the validation layer should block execution immediately.
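
Below is one way the checklist above might look as a pre-execution gate. It is a sketch that relies on several hypothetical stand-ins for your own permission system and database: the tool specs, the role levels, the in-memory `ORDERS` dict, and the sliding-window rate limiter. The nonexistent-order case from the example is handled as a business-rule check, because a type check alone would not catch it.

```python
import time
from collections import defaultdict, deque

# Hypothetical tool specs: action -> (required parameters and their types, minimum role level)
TOOL_SPECS = {
    "lookup_order": ({"order_id": str}, 1),
    "issue_refund": ({"order_id": str, "amount_cents": int}, 2),
}
ROLE_LEVELS = {"viewer": 1, "support_agent": 2}
ORDERS = {"ORD-100001": {"total_cents": 4999}}  # stand-in for the real order database


class ValidationError(Exception):
    """Raised when a proposed tool call must not be executed."""


class RateLimiter:
    """Sliding-window limiter: at most max_calls per key within window_s seconds."""

    def __init__(self, max_calls, window_s):
        self.max_calls = max_calls
        self.window_s = window_s
        self.calls = defaultdict(deque)

    def check(self, key, now):
        q = self.calls[key]
        while q and now - q[0] >= self.window_s:
            q.popleft()
        if len(q) >= self.max_calls:
            raise ValidationError(f"rate limit exceeded for {key}")
        q.append(now)


limiter = RateLimiter(max_calls=5, window_s=60.0)


def validate_tool_call(action, params, role, now=None):
    """Raise ValidationError unless the proposed tool call passes every check."""
    if action not in TOOL_SPECS:                                   # allowed actions
        raise ValidationError(f"unknown action: {action}")
    required, min_level = TOOL_SPECS[action]
    for name, expected in required.items():                        # required parameters
        if name not in params:
            raise ValidationError(f"missing parameter: {name}")
        value = params[name]
        if not isinstance(value, expected) or isinstance(value, bool):  # data types (bool is not a valid int here)
            raise ValidationError(f"{name} must be of type {expected.__name__}")
    if ROLE_LEVELS.get(role, 0) < min_level:                       # permission levels
        raise ValidationError(f"role {role!r} may not call {action}")
    order = ORDERS.get(params["order_id"])                         # business rule: the order must exist
    if order is None:
        raise ValidationError(f"order {params['order_id']} does not exist")
    if action == "issue_refund" and not 0 < params["amount_cents"] <= order["total_cents"]:
        raise ValidationError("refund amount is outside the order total")
    limiter.check(f"{role}:{action}", time.monotonic() if now is None else now)  # rate limits, checked last
```

The rate limit is checked last so that calls rejected for other reasons do not use up the quota.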

Step 3: Reduce Context Noise

Developers often assume more context improves intelligence. In practice, overloaded prompts increase confusion.

Instead:

- Inject only task-relevant information

- Use short memory windows

- Summarize long conversations

- Separate instructions from retrieved data

This approach improves reasoning consistency and reduces execution drift.
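
The sketch below applies these rules when assembling each model request. The names `Turn`, `summarize`, and `build_messages` are hypothetical, and the summarizer is deliberately naive: it just keeps the first sentence of older user turns. The relevance scores on retrieved snippets are assumed to come from your retrieval step. The 0.5 threshold and four-turn window are illustrative, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    role: str  # "user" or "assistant"
    text: str


def summarize(turns):
    """Placeholder summarizer (hypothetical): keeps the first sentence of each older user turn.
    In production this would typically be a separate, cheaper model call."""
    points = [t.text.split(".")[0] for t in turns if t.role == "user"]
    return "Summary of earlier conversation: the user said " + "; ".join(points)


def build_messages(instructions, history, snippets, question, window=4, min_score=0.5):
    """Assemble a compact context: instructions, a summary of old turns, recent turns, filtered data.
    `window` must be at least 1."""
    older, recent = history[:-window], history[-window:]
    relevant = [text for text, score in snippets if score >= min_score]  # inject only task-relevant data
    messages = [{"role": "system", "content": instructions}]            # instructions stay separate from data
    if older:
        messages.append({"role": "system", "content": summarize(older)})
    messages += [{"role": t.role, "content": t.text} for t in recent]   # short memory window
    data = "\n".join(f"<doc>{text}</doc>" for text in relevant)
    messages.append({
        "role": "user",
        "content": f"<retrieved_data>\n{data}\n</retrieved_data>\n\nTask: {question}",
    })
    return messages
```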

Step 4: Add Output Verification

One effective production strategy is using a second verification layer.

This can be:

- A secondary AI verifier agent

- A rule-based validation engine

- A deterministic logic checker

In enterprise systems, verification layers are often used to compare generated outputs against original source data before execution.
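
Here is a minimal sketch of the rule-based option. It compares a drafted reply against the source record it was supposed to be based on, before anything is sent or executed. It assumes the agent returns a draft with a `facts` dict and a free-text `reply`; that format and the function name `verify_against_source` are hypothetical. Checking that every number in the reply appears in the source is a cheap heuristic. It catches many invented prices and IDs, though not every kind of fabrication.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def verify_against_source(draft, source):
    """Return a list of problems; an empty list means the draft agrees with the source record."""
    problems = []
    # 1. Every structured fact the draft asserts must exist in the source and match exactly.
    for key, value in draft.get("facts", {}).items():
        if key not in source:
            problems.append(f"unsupported field: {key}")
        elif source[key] != value:
            problems.append(f"{key}: draft says {value!r}, source says {source[key]!r}")
    # 2. Every number in the free-text reply must appear somewhere in the source record.
    source_numbers = set(NUMBER.findall(" ".join(str(v) for v in source.values())))
    for number in NUMBER.findall(draft.get("reply", "")):
        if number not in source_numbers:
            problems.append(f"number {number} in reply is not in the source")
    return problems
```

Take a source record of `{"order_id": "ORD-100001", "status": "shipped", "total": "49.99"}`. A draft that claims the status is `delivered`, adds a `refund_eligible` field, and mentions a `59.99` refund produces three problems, so the checker would block it.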

Step 5: Implement Human Approval for Sensitive Actions

Not every workflow should be fully autonomous.

Require manual approval for:

- Financial operations

- Database deletion tasks

- Admin permission changes

- Legal or compliance responses

This hybrid model is commonly used in production-grade AI operations because it balances automation with safety.
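
The following sketches a human-approval gate built on a simple in-memory queue. The action names in `SENSITIVE_ACTIONS` map loosely to the categories listed above and are hypothetical. So is `_execute`, which stands in for your real tool dispatcher. Low-risk actions run immediately, while sensitive ones return a ticket and wait for a reviewer.

```python
import uuid
from dataclasses import dataclass, field

# Hypothetical action names for the sensitive categories listed above.
SENSITIVE_ACTIONS = {"issue_refund", "delete_records", "change_admin_role", "send_legal_response"}


@dataclass
class ApprovalGate:
    pending: dict = field(default_factory=dict)   # ticket -> (action, params)
    executed: list = field(default_factory=list)  # audit trail of what actually ran

    def submit(self, action, params):
        """Run low-risk actions now; park sensitive ones until a human decides."""
        if action in SENSITIVE_ACTIONS:
            ticket = uuid.uuid4().hex
            self.pending[ticket] = (action, params)
            return {"status": "pending_approval", "ticket": ticket}
        return self._execute(action, params)

    def approve(self, ticket, reviewer):
        action, params = self.pending.pop(ticket)  # KeyError if unknown or already decided
        return self._execute(action, {**params, "approved_by": reviewer})

    def reject(self, ticket, reviewer, reason):
        action, _ = self.pending.pop(ticket)
        return {"status": "rejected", "action": action, "by": reviewer, "reason": reason}

    def _execute(self, action, params):
        # Stand-in for the real tool dispatcher.
        self.executed.append((action, params))
        return {"status": "executed", "action": action}
```

In a real deployment the pending queue would live in a database and the approve/reject calls would come from an admin UI. The control flow stays the same.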

Step 6: Build Observability and Logging

If you cannot trace an agent decision, you cannot debug hallucinations effectively.

Track:

- User inputs

- Retrieved documents

- Prompt versions

- Tool calls

- Execution paths

- Error states

Detailed logs help developers identify whether failures come from retrieval issues, reasoning problems, or broken integrations.
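
Here is a minimal tracing sketch using Python's standard `logging` and `json` modules. Every step is written as one JSON line tagged with a shared trace ID and the prompt version, so a single failed run can be reconstructed end to end. The event kinds and field names are illustrative assumptions, not a standard schema.

```python
import json
import logging
import time
import uuid

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("agent.trace")


class AgentTrace:
    """Records one structured event per agent step, tagged with a shared trace ID."""

    def __init__(self, prompt_version):
        self.trace_id = uuid.uuid4().hex
        self.prompt_version = prompt_version
        self.events = []

    def log(self, kind, **payload):
        event = {
            "trace_id": self.trace_id,
            "step": len(self.events),
            "ts": time.time(),
            "prompt_version": self.prompt_version,
            "kind": kind,  # user_input | retrieval | tool_call | decision | error
            **payload,
        }
        self.events.append(event)
        logger.info(json.dumps(event, default=str))  # one JSON line per event, easy to ship and query
        return event


trace = AgentTrace(prompt_version="support-v12")
trace.log("user_input", text="Please refund order ORD-999999")
trace.log("retrieval", doc_ids=["refund-policy"])
trace.log("tool_call", tool="lookup_order", params={"order_id": "ORD-999999"}, ok=False)
trace.log("error", message="order not found; refund blocked by validator")
```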

Advanced Techniques Used by Production AI Teams in 2026

Graph-Based Retrieval Systems

Instead of simple keyword search, graph retrieval follows explicit relationships between entities. This reduces incorrect assumptions because the retrieved context spells out how data points relate to each other, instead of leaving the model to infer it.
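
The following toy sketch illustrates graph retrieval. Facts are stored as (subject, relation, object) triples, and the retriever walks outward from the entity in the question, returning every triple within a few hops. The triples and IDs are hypothetical. Production systems would typically use a graph database rather than an in-memory dict.

```python
from collections import defaultdict, deque

# Hypothetical facts as (subject, relation, object) triples.
TRIPLES = [
    ("ORD-100001", "placed_by", "CUST-42"),
    ("ORD-100001", "contains", "SKU-LAPTOP-15"),
    ("SKU-LAPTOP-15", "covered_by", "warranty-policy"),
    ("CUST-42", "has_tier", "premium"),
    ("ORD-200002", "placed_by", "CUST-77"),
]


def build_graph(triples):
    """Index each triple under both endpoints so traversal works in either direction."""
    graph = defaultdict(list)
    for s, r, o in triples:
        graph[s].append((s, r, o))
        graph[o].append((s, r, o))
    return graph


def neighborhood_facts(graph, start, max_hops=2):
    """Breadth-first walk from `start`, collecting every triple within `max_hops` edges."""
    seen, facts = {start}, []
    queue = deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue
        for triple in graph.get(node, []):
            if triple not in facts:
                facts.append(triple)
            for neighbor in (triple[0], triple[2]):
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append((neighbor, depth + 1))
    return facts


def facts_as_context(facts):
    """Render facts as explicit lines the model can quote instead of infer."""
    return "\n".join(f"{s} --{r}--> {o}" for s, r, o in facts)


graph = build_graph(TRIPLES)
print(facts_as_context(neighborhood_facts(graph, "ORD-100001")))
```

Starting from `ORD-100001` with `max_hops=2`, the result includes the customer's tier and the product's warranty policy but not the unrelated order `ORD-200002`. The model therefore receives the exact chain of relationships rather than a bag of loosely matching text.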

Schema-Constrained Outputs

Force the model to generate structured JSON or another predefined format instead of free-form text. A parser can then reject any output that does not match the schema before it reaches a tool, which makes tool calls far more reliable.
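
This minimal sketch enforces a schema on the model's output using only the standard library. The raw text is accepted only if it parses as a single JSON object with known fields and an allowed action. `ToolCall`, `Action`, and the field names are hypothetical. Libraries such as Pydantic or jsonschema can do this more thoroughly. Even if your model provider offers a structured-output mode, a check like this is still worth keeping as the last step before execution.

```python
import json
from dataclasses import dataclass
from typing import Literal, get_args

Action = Literal["lookup_order", "issue_refund", "escalate_to_human"]
ALLOWED_FIELDS = {"action", "order_id", "note"}


@dataclass(frozen=True)
class ToolCall:
    action: Action
    order_id: str
    note: str = ""


def parse_tool_call(raw: str) -> ToolCall:
    """Accept model output only if it is one JSON object matching ToolCall; raise ValueError otherwise."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    unknown = set(data) - ALLOWED_FIELDS
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")
    if data.get("action") not in get_args(Action):
        raise ValueError(f"action must be one of {get_args(Action)}")
    if not isinstance(data.get("order_id"), str) or not data["order_id"]:
        raise ValueError("order_id must be a non-empty string")
    if not isinstance(data.get("note", ""), str):
        raise ValueError("note must be a string")
    return ToolCall(**data)
```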

Self-Reflection Loops

Some systems ask the model to review its own output before execution. This does not eliminate hallucinations entirely, but it helps detect obvious contradictions.
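
Here is a sketch of a bounded self-reflection loop. `generate` and `critique` are hypothetical callables standing in for model calls, and the toy versions below exist only so the loop can run without an API. The loop stops once the critique finds no issues. If issues remain after `max_rounds` revisions, it returns `None`, so the caller can escalate rather than execute a draft the model itself flagged.

```python
from typing import Callable, List, Optional


def reflect_and_revise(
    task: str,
    generate: Callable[[str, List[str]], str],
    critique: Callable[[str, str], List[str]],
    max_rounds: int = 2,
) -> Optional[str]:
    """One initial draft plus up to max_rounds revisions; returns None if issues remain."""
    issues: List[str] = []
    for _ in range(max_rounds + 1):
        draft = generate(task, issues)  # the generator sees the previous round's issues
        issues = critique(task, draft)  # the reviewer lists contradictions it can detect
        if not issues:
            return draft
    return None  # still flagged: escalate to a human or a stricter path instead of executing


# Toy stand-ins for model calls so the loop runs without an API (hypothetical behavior).
def toy_generate(task, issues):
    return "Refund window is 30 days." if issues else "Refund window is 60 days."


def toy_critique(task, draft):
    return [] if "30 days" in draft else ["Draft contradicts the policy source, which says 30 days."]


print(reflect_and_revise("State the refund window.", toy_generate, toy_critique))
```

Running this prints `Refund window is 30 days.` because the critique flags the first draft and the revision passes.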

Low Temperature Execution Modes

For operational agents, temperatures near 0.0 provide more stable and predictable outputs.
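
Temperature rescales the model's scores (logits) before they are turned into probabilities: each logit is divided by the temperature, and the softmax is applied to the result. The sketch below shows that effect on three hypothetical token scores. Dividing by zero is undefined, so the sketch treats temperature 0 as greedy argmax, which mirrors how it is commonly handled.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by temperature; 0 is treated as greedy argmax."""
    if temperature < 0:
        raise ValueError("temperature must be non-negative")
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate next tokens
for t in (0.0, 0.2, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: " + ", ".join(f"{p:.3f}" for p in probs))
```

As the temperature falls, probability concentrates on the top-ranked token, which is why outputs become repeatable. It does not make that token correct. If the model's top choice is a fabricated value, a low temperature simply produces the same fabrication every time, so this setting complements grounding and validation rather than replacing them.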

Tool Permission Isolation

Separate high-risk tools from low-risk tools. For example, a support agent may read customer records but should not delete them.
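
This sketch implements permission isolation through a filtered tool registry. Each agent profile only ever receives the tools its risk level allows, so a support agent is never even shown `delete_customer`. The check in `call_tool` is a second line of defense. The tool names, profiles, and three risk levels are hypothetical.

```python
from enum import Enum


class Risk(Enum):
    READ = "read"
    WRITE = "write"
    DESTRUCTIVE = "destructive"


# Hypothetical tool registry: name -> (risk level, implementation stub)
TOOLS = {
    "read_customer": (Risk.READ, lambda cid: {"id": cid, "name": "Ada"}),
    "update_address": (Risk.WRITE, lambda cid, addr: f"address of {cid} set to {addr}"),
    "delete_customer": (Risk.DESTRUCTIVE, lambda cid: f"{cid} deleted"),
}

# Each agent profile gets an explicit allow-list of risk levels.
AGENT_PROFILES = {
    "support_agent": {Risk.READ},
    "account_agent": {Risk.READ, Risk.WRITE},
}


def tools_for(profile):
    """Return only the tools this profile may see; unknown profiles get nothing."""
    allowed = AGENT_PROFILES.get(profile, set())
    return {name: fn for name, (risk, fn) in TOOLS.items() if risk in allowed}


def call_tool(profile, name, *args):
    """Dispatch a call, refusing any tool outside the profile's allow-list."""
    available = tools_for(profile)
    if name not in available:
        raise PermissionError(f"{profile} is not allowed to call {name}")
    return available[name](*args)
```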

Real World Use Cases Where Hallucination Prevention Matters

Customer Support Automation

AI support agents often hallucinate refund policies, shipping details, or account data. Adding retrieval systems connected to live policy databases improves reliability significantly.

eCommerce Operations

Order management agents can accidentally trigger duplicate refunds or incorrect stock updates. Validation layers protect operational workflows.

SaaS Analytics Platforms

Dashboard assistants sometimes generate incorrect metrics when they cannot access complete data. Schema validation and controlled queries reduce this problem.

Internal Enterprise Assistants

Organizations using AI for internal documentation search need strict grounding to avoid misinformation inside operational teams.

Pros and Limitations of Current Hallucination Solutions

Advantages

- Improves reliability in production systems

- Reduces operational risk

- Builds user trust

- Creates safer automation pipelines

- Improves debugging and monitoring

Limitations

- Requires additional engineering effort

- Can increase infrastructure complexity

- Verification layers add latency

- Monitoring systems require continuous maintenance

Despite these tradeoffs, production-grade AI systems cannot operate safely without these controls.

Who Should Prioritize Hallucination Prevention

Strongly recommended for:

- SaaS startups building AI workflows

- Developers deploying autonomous agents

- Businesses automating customer operations

- Teams handling sensitive financial or personal data

- API-driven AI platforms

Lower priority for:

- Simple hobby projects

- Creative writing bots

- Non-critical experimental tools

Best Practices for Long Term AI Agent Stability

- Start with narrow task scopes before expanding autonomy

- Test edge cases aggressively before deployment

- Use structured outputs wherever possible

- Separate reasoning from execution layers

- Monitor token usage and looping behavior

- Regularly refresh retrieval indexes and keep documentation sources up to date

- Review failure logs weekly instead of waiting for incidents
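
To put "monitor token usage and looping behavior" into practice, a small guard like this one can stop a run before it turns into the endless reasoning cycle described in the failure table earlier. The names and limits here are hypothetical and illustrative. The guard counts steps and tokens against a budget, and it flags the same tool being called with identical arguments more than `max_repeats` times.

```python
from collections import Counter


class LoopGuard:
    """Stops an agent run that exceeds a step/token budget or repeats the same tool call too often."""

    def __init__(self, max_steps=20, max_tokens=50_000, max_repeats=3):
        self.max_steps = max_steps
        self.max_tokens = max_tokens
        self.max_repeats = max_repeats
        self.steps = 0
        self.tokens = 0
        self.seen = Counter()

    def check(self, tool, args, tokens_used):
        """Record one step; return a reason string if the run should stop, else None.
        Argument values must be hashable so identical calls can be counted."""
        self.steps += 1
        self.tokens += tokens_used
        key = (tool, tuple(sorted(args.items())))  # identical call = same tool + same arguments
        self.seen[key] += 1
        if self.seen[key] > self.max_repeats:
            return f"repeated {tool} call {self.seen[key]} times"
        if self.steps > self.max_steps:
            return "step budget exceeded"
        if self.tokens > self.max_tokens:
            return "token budget exceeded"
        return None
```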

One practical lesson from production deployments is that small improvements in validation often reduce hallucination rates more effectively than switching to a larger model.

Conclusion

Fixing hallucination errors in custom AI agents requires more than better prompts. Stable systems are built through layered architecture, verified retrieval, strict validation, and continuous monitoring.

Developers who treat hallucination prevention as an engineering problem instead of a model problem build agents that are safer, more predictable, and easier to scale. As AI agents become more autonomous in 2026, reliability will become one of the biggest competitive advantages for any platform using AI in production.